17/02/2021

Transformation

It’s time to move on from the four-hour Emergency Department standard

With the NHS consultation into how urgent care performance should be measured just closed, The PSC’s Transformation Team explains why it’s time for a fresh start.

The biggest vaccine roll-out ever to take place in Britain is underway, so the optimistic among us could be forgiven for starting to entertain thoughts of what the NHS might look like post-crisis.

Our Same Day Emergency Care (SDEC) roundtable in January underlined just how much has changed in recent months. From emergency departments (EDs) to hospitals to how providers work together to secure the right care for patients, the urgent care system will look fundamentally different in 2022 from how it did in 2019.

If SDEC is already transforming urgent care to benefit both patient and provider, the way we measure performance, and assess how well the system is functioning, must change too.

The four-hour standard

Since 2004 we have relied on the 4-hour standard as the fundamental benchmark of performance. From 2004 to 2010, the target was that at least 98% of people attending an ED should be seen, treated and admitted or discharged in under 4 hours. In 2010, the bar was lowered to 95%.

By this metric, policymakers and regulators have assessed how hospitals are performing in delivering urgent care to patients. It has acted as a powerful motivator for improvement: the 4-hour standard has been associated with reduced waiting times, crowding and mortality in EDs (according to The Royal College of Emergency Medicine), as well as better hospital bed management and access to investigations (see the British Medical Journal).

However, it is no longer fit for purpose and the NHS as a whole has not achieved the standard since June 2015.

Why isn’t it working?

The four-hour rule has two major failings as a measure of ED performance. First, reaching the standard involves much more than just EDs: the wider health and care system shares responsibility. Second, it is plagued with ‘gaming’ that often doesn’t benefit patient outcomes.

When we say that the wider health and care system plays a role in reaching this standard, we’re talking about, for example, the inflow of patients to EDs, which can be reduced through both preventative and alternative community-based services. Equally, supporting the outflow of patients from hospital into other settings frees up valuable space, which makes it easier to place patients from the ED.

We have seen this in our work at The PSC, and we know that to move the dial significantly on timely discharge from hospital, the availability of care provision and partnership-working with social care staff are crucial.

To speak briefly about ‘gaming’, a well-known management maxim says:

"What gets measured gets managed - even when it's pointless to measure and manage it, and even if it harms the purpose of the organisation to do so."

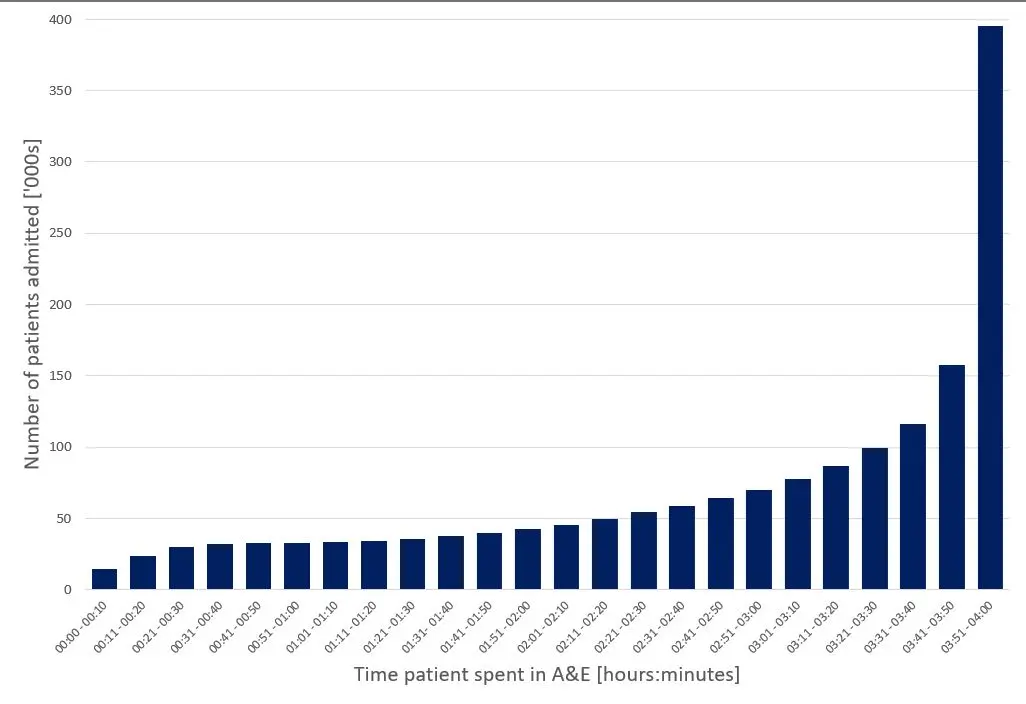

The 4-hour standard can incentivise non-constructive behaviour in this way. When people attending ED are about to breach the 4-hour standard, the pass/fail system makes it logical to prioritise them for quick action. This often means admission to a bed [see figure 1], or other (sometimes inappropriate) patient placement such that the patient is no longer counted in ED. This does not reflect a high-quality service: it can lead to actions which are ultimately in neither the patient’s nor the hospital’s best interests, and which are potentially to the detriment of more acutely unwell patients who haven’t been waiting as long.
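To make the incentive concrete, here is a minimal sketch of how a pass/fail measure can improve while patients' overall experience gets worse. The waiting times are invented for illustration and the function names are our own; this is not how NHS performance data is actually processed.

```python
FOUR_HOURS = 4 * 60  # the standard, expressed in minutes


def share_within_four_hours(minutes_in_ed):
    """Proportion of attendances completed in under four hours."""
    return sum(m < FOUR_HOURS for m in minutes_in_ed) / len(minutes_in_ed)


def mean_minutes(minutes_in_ed):
    """Average time spent in ED, in minutes."""
    return sum(minutes_in_ed) / len(minutes_in_ed)


# Hypothetical day 1: patients are dealt with in order of clinical need.
clinical_order = [45, 60, 120, 180, 250]

# Hypothetical day 2: the patient about to breach is moved at 235 minutes,
# while a more acutely unwell patient waits 110 minutes instead of 60.
breach_avoidance = [45, 110, 120, 180, 235]

for label, times in [("clinical order", clinical_order),
                     ("breach avoidance", breach_avoidance)]:
    print(label, share_within_four_hours(times), mean_minutes(times))
# clinical order 0.8 131.0
# breach avoidance 1.0 138.0
```

In this invented example the headline figure rises from 80% to 100%, even though the average time in ED has gone up and the sicker patient has waited longer.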

What is the proposed alternative?

The way we measure urgent care performance must offer incentives that work better for providers and for patients. NHSE has recently proposed a bundle of metrics that could be used instead of the 4-hour standard. It has chosen to focus on what is clinically meaningful and what patients report is important to them. With 10 metrics in total (pictured below), the bundle covers multiple components of the urgent care system, promoting a whole-system view and collaborative system working.

Here it is explicitly recognised that what happens to patients in EDs is linked to what is happening in the wider system. For example, “patients spending >12 hours in ED” is classed as a whole-system metric. This is because it suggests wider system problems are preventing patients from being transferred to services more appropriate for their needs.

Is this new bundle the solution?

There’s a lot to be hopeful about in these new metrics. First, by following a patient’s journey to and through the urgent care system, the bundle encourages more coordinated working between services. It also supports other transformational changes already happening in the system, for example the expansion of the clinical assessment service (CAS) within NHS 111. And since performance is assessed by viewing all the metrics together, the system is harder to manipulate.

But there are a few potential downsides. The flipside of the richer picture presented by multiple measures is that the new data will be much more difficult to interpret, especially for people who are not health experts. Adding to the nuance, it’s difficult for any set of metrics to fully take into account the variety of delivery models on the ground, meaning that comparisons need to be made extremely carefully.

A good example of where this could cause some confusion is in data on Urgent Treatment Centres (UTCs), which will be collected separately from this bundle. In some places UTCs are co-located with emergency departments, meaning that UTCs can take patients eligible for ED, lowering the number of ED attends recorded. This could either reduce or raise the mean time spent in ED: lower attends could lead to patients being seen faster or, alternatively, less complex patients could be streamed away to UTCs, leaving more complex patients with a higher mean time in ED.
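A small worked example shows how the same change can push the mean in either direction. This is only a sketch: the waiting times, the split into "simple" and "complex" patients, and the `speedup` parameter are all assumptions made up for illustration.

```python
def mean_time_in_ed(simple, complex_, streamed=0, speedup=0.0):
    """Mean minutes in ED after some simple patients go to a UTC.

    streamed: how many simple patients are seen in the UTC instead of ED.
    speedup:  assumed fractional reduction in time for those left in ED.
    """
    remaining = simple[streamed:] + complex_
    return sum(m * (1 - speedup) for m in remaining) / len(remaining)


# Hypothetical minutes in ED before a UTC is co-located.
simple = [60, 80, 100]
complex_ = [200, 240, 280]

before = mean_time_in_ed(simple, complex_)
# One simple patient is streamed away and everyone left is seen 25% faster.
seen_faster = mean_time_in_ed(simple, complex_, streamed=1, speedup=0.25)
# All simple patients are streamed away; complex patients are no faster.
case_mix_shift = mean_time_in_ed(simple, complex_, streamed=3)

print(before, seen_faster, case_mix_shift)  # 160.0 135.0 240.0
```

With these invented numbers the mean falls from 160 to 135 minutes in one scenario and rises to 240 in the other, although no individual patient has waited longer in either case.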

The bundle also isn’t “catch all” in other ways: for example, it doesn’t measure the effectiveness of key operational processes such as diagnostic turnaround times in ED. It is important that these aren’t forgotten and that hospitals continue to track these measures locally, as efficient processes are key drivers of the higher-level performance metrics. From a practical perspective though, recording more metrics will take more time from front-line staff, who might otherwise be interacting with patients. This could lead to some resistance.

Whichever metric or set of metrics is chosen to measure urgent care performance, it will not be perfect. The urgent care landscape and ways of working are fundamentally different now from even just a few years ago, and the transformative changes brought on by COVID, such as clinicians beginning to consider SDEC by default, are a powerful example of this.

We need to reflect on what has changed and what we have learned about how we monitor urgent care over recent months, and these metrics could well be a positive step towards that. What is clear is that this is the perfect moment for a fresh start – let's take it.

This is part of our focus on SDEC - how it can make a difference and what we can do to implement it effectively. If you'd like to read more about our recent roundtable featuring experts across urgent care, see our blog.
